The rapid evolution of artificial intelligence has birthed a complex new vocabulary. To navigate this landscape, you need to understand the mechanics and the metaphors driving the industry.

The Core Models

At the heart of the boom are Large Language Models (LLMs). These are deep neural networks, layered mathematical structures loosely inspired by the human brain, that learn statistical relationships between words from billions of words of text. Popular assistants like ChatGPT, Claude, Gemini, and Microsoft Copilot use these models to predict the most likely response to a prompt, building it one small piece of text (a token) at a time.
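To make "predicting the most likely response" concrete, here is a minimal sketch of the final step: turning a model's raw scores into probabilities and picking the top candidate. The vocabulary and scores are invented for illustration; a real model scores tens of thousands of tokens and usually samples rather than always taking the top one.

```python
import math

def softmax(logits):
    """Convert raw scores into probabilities that sum to 1."""
    peak = max(logits)  # subtract the max for numerical stability
    exps = [math.exp(x - peak) for x in logits]
    total = sum(exps)
    return [e / total for e in exps]

def most_likely_token(vocab, logits):
    """Return the highest-probability token and its probability."""
    probs = softmax(logits)
    best = max(range(len(vocab)), key=lambda i: probs[i])
    return vocab[best], probs[best]

# Hypothetical scores a model might assign after the prompt "The sky is"
vocab = ["blue", "green", "loud"]
logits = [3.0, 1.0, -1.0]

token, prob = most_likely_token(vocab, logits)
print(token, round(prob, 3))
```

Repeating this step, appending each chosen token to the prompt, is how a full response gets built.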

While LLMs handle text, diffusion models power creative AI for images and music. They are trained to reverse a process that gradually corrupts data with random noise, which lets them generate new content starting from pure noise. For realistic media like deepfakes, Generative Adversarial Networks (GANs) use a two-model “contest” in which a generator produces content and a discriminator tries to spot the fake, pushing both to improve.
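The "destruction" a diffusion model learns to undo can be sketched in a few lines. This is a simplified illustration, assuming the common formulation in which a clean signal is blended with Gaussian noise; the name `alpha_bar` and the toy four-number "signal" are assumptions for the example, and no trained model is involved.

```python
import math
import random

def add_noise(signal, alpha_bar, rng):
    """Blend a clean signal with Gaussian noise.

    alpha_bar near 1.0 keeps the signal mostly intact;
    alpha_bar near 0.0 leaves almost pure noise.
    """
    noise = [rng.gauss(0.0, 1.0) for _ in signal]
    noisy = [
        math.sqrt(alpha_bar) * x + math.sqrt(1.0 - alpha_bar) * n
        for x, n in zip(signal, noise)
    ]
    return noisy, noise

rng = random.Random(0)  # fixed seed so the run is repeatable
clean = [1.0, -1.0, 0.5, 0.0]
noisy, noise = add_noise(clean, alpha_bar=0.5, rng=rng)

# A trained model would be asked to predict `noise` given only `noisy`.
# With a perfect prediction, the clean signal can be recovered exactly:
recovered = [
    (y - math.sqrt(0.5) * n) / math.sqrt(0.5)
    for y, n in zip(noisy, noise)
]
```

In practice the model's noise prediction is imperfect, so generation removes noise over many small steps rather than in one jump.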

How AI Thinks and Learns

AI doesn’t just “know” things; its abilities come from several training and reasoning techniques:

- Deep Learning: A subset of machine learning that uses multi-layered neural networks to identify patterns in data without hand-written rules or manually designed features.

- Fine-tuning: Taking a pre-trained model and giving it specialized data to excel at a specific task.

- Distillation: Training a smaller “student” model to mimic a massive “teacher” model, resulting in faster, more efficient tools.

- Chain of Thought: A reasoning technique where the AI breaks complex problems into intermediate steps, improving accuracy in logic and coding.
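Of the techniques above, distillation is the easiest to show in miniature. The sketch below assumes one common recipe: soften both models' scores with a "temperature" and measure how far the student's probabilities are from the teacher's using KL divergence. The scores and the temperature value are made up for illustration.

```python
import math

def softmax(logits, temperature=1.0):
    """Turn scores into probabilities; higher temperature flattens them."""
    scaled = [x / temperature for x in logits]
    peak = max(scaled)
    exps = [math.exp(x - peak) for x in scaled]
    total = sum(exps)
    return [e / total for e in exps]

def distillation_loss(teacher_logits, student_logits, temperature=2.0):
    """KL divergence from the teacher's softened distribution to the student's."""
    p = softmax(teacher_logits, temperature)
    q = softmax(student_logits, temperature)
    return sum(pi * math.log(pi / qi) for pi, qi in zip(p, q))

# Hypothetical scores over three possible answers
teacher = [4.0, 1.0, 0.5]
close_student = [3.8, 1.1, 0.4]   # nearly agrees with the teacher
far_student = [0.5, 4.0, 1.0]     # prefers a different answer

print(distillation_loss(teacher, close_student))
print(distillation_loss(teacher, far_student))
```

Training the student means adjusting its weights to push this loss toward zero, so a student that mimics the teacher well scores lower than one that disagrees.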

Infrastructure and Execution

The physical side of AI is defined by compute—the raw processing power provided by GPUs and specialized chips. To handle massive workloads, developers use parallelization, performing thousands of calculations simultaneously.
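The idea behind parallelization is that many calculations do not depend on one another, so they can be handed out to separate workers. Here is a small sketch using Python's standard thread pool to compute each row of a matrix-vector product as an independent task. It illustrates the structure only: Python threads will not speed up this arithmetic, whereas a GPU runs thousands of such tasks at once in hardware.

```python
from concurrent.futures import ThreadPoolExecutor

def dot(row, vector):
    """Multiply matching entries and add them up."""
    return sum(a * b for a, b in zip(row, vector))

def matvec_parallel(matrix, vector, workers=4):
    """Compute each output element as its own independent task."""
    with ThreadPoolExecutor(max_workers=workers) as pool:
        # pool.map returns results in the same order as the input rows
        return list(pool.map(lambda row: dot(row, vector), matrix))

matrix = [[1, 2], [3, 4], [5, 6]]
vector = [10, 1]
result = matvec_parallel(matrix, vector)
print(result)  # [12, 34, 56]
```

Because no row needs another row's result, the work splits cleanly, which is the property that makes neural network math such a good fit for parallel chips.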

When you interact with an AI, you are witnessing inference: the model applying what it learned in training to generate an answer. To speed this up, memory caching stores the results of earlier calculations so they do not have to be repeated. Developers connect these systems using API endpoints, which act as digital “buttons” that one software program can press to request a service from another.
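Caching is simple to demonstrate with Python's built-in `functools.lru_cache`. The function below is a hypothetical stand-in for a costly calculation over the start of a prompt; production inference engines cache internal model state rather than whole function results, so treat this as an illustration of the principle only.

```python
from functools import lru_cache

call_count = 0  # tracks how often the real work actually runs

@lru_cache(maxsize=128)
def expensive_step(prompt_prefix):
    """Stand-in for a costly computation over a prompt prefix."""
    global call_count
    call_count += 1
    return sum(ord(ch) for ch in prompt_prefix)

first = expensive_step("Translate to French:")
second = expensive_step("Translate to French:")  # served from the cache

print(call_count)  # 1: the second call reused the stored result
```

The second call returns immediately because the answer for that exact input is already stored, which is the same trade of memory for speed that inference systems make.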

The Future: Agents and AGI

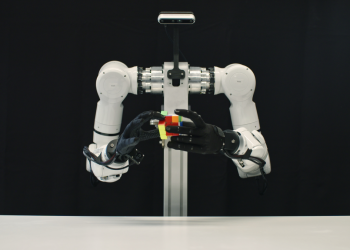

We are moving from chatbots to AI agents, autonomous systems that can book flights or file expenses. Specialized coding agents can even write and debug software independently.
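What separates an agent from a chatbot is a loop: decide on an action, run a tool, look at the result, and repeat until the goal is met. The toy sketch below shows that loop under heavy assumptions. The tools, the flight code, and the hard-coded `choose_action` function are all hypothetical; in a real agent a language model would make that decision and the tools would call actual services.

```python
def search_flights(state):
    """Hypothetical tool: pretend to find a flight."""
    state["flight"] = "HYPOTHETICAL-123"
    return "found flight"

def book_flight(state):
    """Hypothetical tool: pretend to book the flight found earlier."""
    state["booked"] = state["flight"]
    return "booked"

TOOLS = {"search_flights": search_flights, "book_flight": book_flight}

def choose_action(state):
    """Stand-in for the model's decision; a real agent would ask an LLM here."""
    if "flight" not in state:
        return "search_flights"
    if "booked" not in state:
        return "book_flight"
    return None  # goal reached

def run_agent(max_steps=5):
    """Repeat decide-then-act until done or the step limit is hit."""
    state, log = {}, []
    for _ in range(max_steps):
        action = choose_action(state)
        if action is None:
            break
        log.append((action, TOOLS[action](state)))
    return state, log

state, log = run_agent()
print(log)
```

The step limit matters: it keeps an agent that never reaches its goal from running forever.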

The ultimate goal remains Artificial General Intelligence (AGI). While OpenAI and Google DeepMind define it slightly differently, it generally refers to a system that can outperform humans at most cognitive or economically valuable tasks. However, we still face the hurdle of hallucinations, where models confidently generate false information, often because of gaps in their training data.